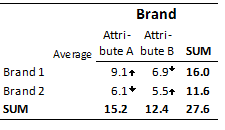

The table below shows that Brand 2 has a higher score for Attribute A than it has for Attribute B. However, the arrows show that 6.1 is significantly lower, which seems counterintuitive. However, there are two key things to appreciate about this table:

- It is a very unusual table. That is, it is not a crosstab of two separate questions. Instead, each cell in the table constitutes a separate variable.

- Most of the time, the obvious patterns in tables like this are uninteresting. Note that Brand 1 has higher scores on both attributes than Brand 2. Also, note that Attribute A has higher scores for both brands than Attribute B. If the testing simply compared differences within rows or columns, the resulting statistical test would, in most instances, be uninteresting. Consequently, Displayr's automatic significance testing focuses on identifying more interesting patterns than those obtained by comparing rows or columns.

A standard analysis approach for grids of this type is to standardize the table, removing row effects (i.e., situations where one brand is consistently higher than the other) and column effects. In the context of categorical data, this is sometimes referred to as chi-square residual analysis or chi-square standardization. The approach that Displayr uses for dealing with Binary Grids is both different from the approach used for Numeric-Grids and from standard practice.

Numeric - Grid

The way that Displayr has computed the 6.1 as being significantly high in the example above (upward arrow) is:

- It has computed an expected average for Attribute A > Brand 2 of 6.4. It has done this by multiplying the row SUM by the column SUM and dividing by the overall SUM (i.e., 6.4 = 11.6 * 15.2 / 27.6).

- It has then conducted a statistical test comparing the observed average of 6.1 with the expected average of 6.4 and concluded that the observed value is significantly beneath the expected value at the 0.05 level of significance.

Now for a more intuitive explanation. Notice that all Attribute 1 scores are greater than the Attribute 2 scores. For brand 1, the difference is 2.2. For brand 2, the difference is 0.7. So, the average difference is 1.4. Thus, the difference of 0.7 is below the average difference, and as this difference is statistically significant, it is marked with a downward arrow.

Binary - Grid

The standard practice for computing expected values on a Binary Grid is to use the method described for a Numeric Grid. This standard practice is derived from the mechanics of the chi-squared test of independence. However, this test explicitly assumes that the row and column categories are mutually exclusive (i.e., are Binary-Grid questions), and this assumption is always violated with Binary - Grid data, as Binary - Grid categories are, by definition, not mutually exclusive. For example, looking at the table above, we can see that 65% of people said that Coke was Older, and 38% said that Pepsi was Older, but the NET is 74%, and thus 29% must have said that both Coke and Pepsi were older (i.e., and thus they are not mutually exclusive).

To reduce the effects of this problem, Displayr computes the expected values using a log-linear model, with main effects for rows and columns. That is:

- A binary logit model is estimated.

- The table is flattened and stacked. In the example above, with 30 cells, the resulting model is estimated using 60 observations (i.e., two per cell in the matrix, where one corresponds to people who selected the option and the other to those who did not).

- A frequency weight is employed, whereby:

- The weight is the proportion of observations multiplied by an estimate of the median effective sample size for the entire table. In the example above, Coke and Feminine thus have weights of 6%*327 = 21 for the cell representing people who selected Coke as Feminine, and 306 for those who did not.

- In cells containing NETs, the weights are further multiplied by 0.01 to limit the potential bias of including such cells in the analysis. Note that while in a Numeric - Grid it is appropriate to exclude such cells as they contain no additional information (i.e., are just the sum of the other results), in a Binary - Grid these cells do contain information that cannot be ascertained from the other cells on the table.

Comments about expected values on grid questions

- As best as we can determine, the question of how to compute expected values on grid questions has never been discussed in the sensible sections of the academic literature (i.e., where discussions do appear, they are demonstrations of the standard approach and do not contain any discussion of the issue of the violation of assumptions caused by the structure of Binary - Grid data).

- We have evaluated our approaches on many examples, and they seem to both generally work and, in the case of the Binary - Grid approach, outperform the standard method; our internal testing has not identified any instances where there are large differences between the conclusions derived from our approach and from the traditional approach.

- The approaches employed by Displayr are entirely ad hoc. Neither approach can be proved to be optimal.

Alternative approaches to computing statistical significance on grids

You can get Displayr to do paired tests comparing the actual values in the columns directly. There are two different ways of doing this:

- Change Show significance to Compare columns.

- Select the cells you want to compare and conduct a Planned Test of Statistical Significance.

UPCOMING WEBINAR: Run Research Your Way With AI Skills

UPCOMING WEBINAR: Run Research Your Way With AI Skills